Over the last decade or two, there have been multiple accounts of how publishers have negotiated the impact factors of their journals with the “Institute for Scientific Information” (ISI), both before it was bought by Thomson Reuters and after. This is commonly done by negotiating which articles count in the denominator, that is, which items are classified as ‘citable’. To my knowledge, the first to point out that this may be going on for at least hundreds of journals were Moed and van Leeuwen, as early as 1995 (and with more data again in 1996). One of the first accounts to show how a single journal accomplished this feat was that of Baylis et al. in 1999, with their example of the FASEB Journal managing to convince the ISI to remove its conference abstracts from the denominator, leading to a jump in its impact factor from 0.24 in 1988 to 18.3 in 1989. Another well-documented case is that of Current Biology, whose impact factor increased by 40% after its acquisition by Elsevier in 2001. To my knowledge, the first and so far only openly disclosed case of such negotiations was PLoS Medicine’s editorial about their negotiations with Thomson Reuters in 2006, where the negotiation range spanned 2-11 (they settled for 8.4). Obviously, such direct evidence of negotiations is exceedingly rare, and publishers are usually quick to point out that they would never be ‘negotiating’ with Thomson Reuters; they would merely ask them to ‘correct’ or ‘adjust’ the impact factors of their journals to make them more accurate. Given that Moed and van Leeuwen already found that most such corrections seemed to increase the impact factor, it appears that these corrections only take place if a publisher considers their IF too low, and only very rarely if the IF may appear too high (and who would blame them?). Besides the old data from Moed and van Leeuwen, we have very little data on how widespread this practice really is.

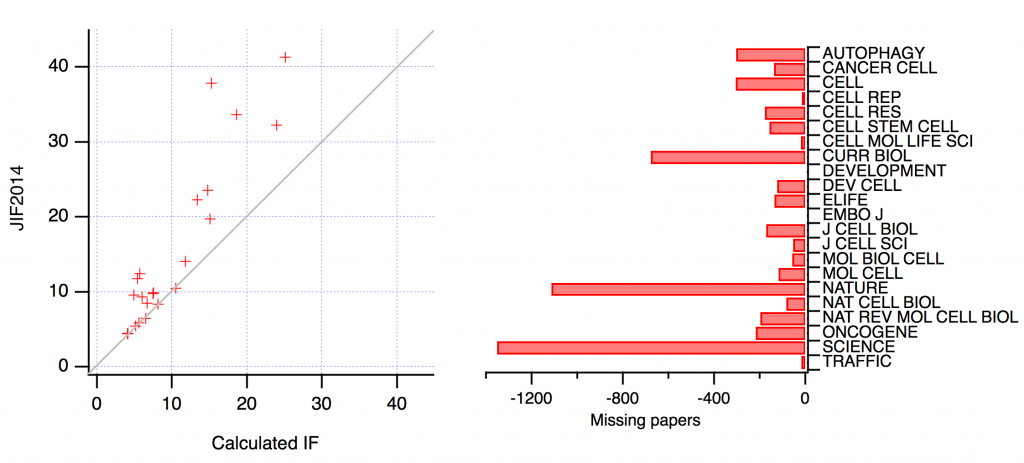

A recent analysis of 22 cell biology journals now provides additional data in line with Moed and van Leeuwen’s initial suspicion that publishers may take advantage of the possibility of such ‘corrections’ on a rather widespread basis. If any errors by Thomson Reuters’ ISI happened randomly and were corrected in an unbiased fashion, then impact factors calculated independently from the available citation data should deviate from the published impact factors in both directions, sometimes higher and sometimes lower. If, however, corrections only ever occur in the direction that increases the impact factor of the corrected journal, then the published impact factors should be higher than the independently calculated ones. The source of such a bias should be articles missing from the denominator of the published impact factor. These ‘missing’ articles can nevertheless be found, as they have been published, just not counted in the denominator. Interestingly, this is exactly what Steve Royle found in his analysis (see the image above):

On the left, you see that any deviation from the perfect correlation is always towards the larger impact factor and on the right you can see that some journals show a massive number of missing articles.
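To make the arithmetic behind the ‘missing’ articles concrete, here is a minimal sketch. It relies on the standard definition of the impact factor (citations in a given year to items from the two preceding years, divided by the number of ‘citable’ items from those two years) and assumes that the published and the independently calculated values share the same citation count in the numerator. The function names and all numbers are hypothetical illustrations; this is not Royle's actual method or data.

```python
def impact_factor(citations: float, citable_items: float) -> float:
    """Citations to the two preceding years divided by citable items in those years."""
    if citable_items <= 0:
        raise ValueError("citable_items must be positive")
    return citations / citable_items


def implied_missing_items(citations: float, items_found: float, published_if: float) -> float:
    """Estimate how many published items are absent from the published denominator.

    Assumption: the published impact factor uses the same citation count,
    so the denominator it implies is citations / published_if.
    """
    if published_if <= 0:
        raise ValueError("published_if must be positive")
    implied_denominator = citations / published_if
    return items_found - implied_denominator


# Hypothetical journal: 3000 citations, 400 items found in the journal,
# and a published impact factor of 10.0.
citations = 3000
items_found = 400
published_if = 10.0

independent_if = impact_factor(citations, items_found)
missing = implied_missing_items(citations, items_found, published_if)

print(f"independent IF: {independent_if}")   # lower than the published value
print(f"implied missing items: {missing}")   # items published but not counted
```

With these made-up numbers, the independently calculated impact factor comes out at 7.5 against a published 10.0, which would correspond to 100 items that were published but not counted as citable. A positive result for every journal in a sample is the one-directional pattern described above; unbiased errors would be expected to produce negative values about as often.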

Clearly, none of this amounts to unambiguous evidence that publishers are increasing their editorial ‘front matter’ both to cite their own articles and to receive citations from outside, only to then tell Thomson Reuters to correct their records. None of this is proof that publishers routinely negotiate with the ISI to inflate their impact factors, let alone that publishers routinely try to make the classification of their articles as citable or not intentionally difficult. There are numerous alternative explanations. However, personally, I find the two old Moed and van Leeuwen papers and this new analysis, together with the commonly acknowledged issue of paper classification by the ISI, just about enough to be suggestive, but I am probably biased.

Particularly noteworthy are the identities of the top three ranked journals in terms of “missing” papers – Science, Nature, and Current Biology.

Those are the journals in the sample with the most different types of articles. It’s apparently what happens when you mix a general magazine with a scholarly journal.