This is a post loosely based on an article appearing today in the German newspaper “Frankfurter Allgemeine Zeitung” by Axel Brennicke and me. The raw data for our analysis is available. Please do let us know if you find a mistake.

UPDATE, 09/01/2015: A commenter made me aware of data rows in the raw data we had overlooked before. In our analysis, we evaluated all non-scientific professional staff, e.g., also technical support or library staff. As I’m actually quite fond of the libraries and the technical support we have, I looked at the trends for the ‘pure’ permanent administration staff and found an increase of 17% from 2005 to 2012, while permanent scientist positions increased only by 0.04%. Taking only these two groups of employees, the ratio between scientists and administrators shrinks to 0.57 in 2005 and 0.64 in 2012, i.e., the average administrator has to support fewer than two permanently employed scientists. In my opinion, this would have been the better data to use, but my co-author is not quite as convinced. Either way, even focusing on ‘pure’ admin staff conveys essentially the same message as the full overall data, albeit perhaps less dramatically. This is precisely why I am an open science advocate: making your data open allows you to discover more and improve your science!

UPDATE, 16/03/2015: I was just made aware of very similar numbers from the USA, published in this article by Jon Marcus.

Noam Chomsky, writing about the Death of American Universities, recently reminded us that reforming universities using a corporate business model leads to several easy-to-understand consequences: the increase of the precariat of faculty without benefits or tenure, a growing layer of administration and bureaucracy, and the increase in student debt. In part, this well-known corporate strategy serves to increase labor servility. The student debt problem is particularly obvious in countries with tuition fees, especially in the US where a convincing argument has been made that the tuition system is nearing its breaking point. The decrease in tenured positions is also quite well documented (see e.g., an old post). So far, and perhaps as may have been expected, Chomsky was dead on with his assessment. But how about the administrations?

To my knowledge, nobody has so far checked if there really is any growth in university administration and bureaucracy, apart from everybody complaining about it (but see update above with link to similar numbers from the US). So Axel Brennicke and I decided to have a look at the numbers. In Germany, employment statistics can be obtained from the federal statistics registry, Destatis. We sampled data from 2005 (the year before the Excellence Initiative and the Higher Education Pact) and the latest year we were able to obtain, 2012.

The aim of the Excellence Initiative and the Higher Education Pact was to improve the funding situation for universities to increase scientific competitiveness and to allow them to cope with the rising student numbers. Student numbers increased from 2005 to 2012 by 25% to 2.5 million. The number of full-time university employees in research and teaching also increased, but only by 18% to just over 142,000, i.e., not keeping up with student numbers. Looking at the type of contracts, we can confirm the US trend of an increasing precariat also here in Germany: in 2005, 50% of all full-time employees were on short-term contracts. This fraction increased by about one percentage point per year to now more than 58%.

University leadership and politicians often argue that such short-term employment benefits research as scientists constantly need to learn new skills in different places and the short-term contracts keep the work-force up-to-date and flexible. However, this argument also holds for administrations: fluctuating student numbers, a constantly changing funding landscape and the constant turnover of university employees ought to mandate a flexible and slim administration as well.

However, the numbers speak a different language. Every full-time university scientist is administered by 1.28 employees in administration. Of these 182,255 administrative and professional employees, only 25% are on fixed-term contracts. The remaining 75% are virtually tenured: it is almost impossible to make them redundant, according to German laws and regulations for public employees.

Perhaps the two initiatives have improved on this situation? Maybe in 2005, the situation was even worse? Alas, the fraction of ‘tenured’ administrative personnel and professional staff was only 70% in 2005. In fact, for every permanent position that was created in research and teaching between 2005 and 2012, 3.7 such positions were newly created in the university administrations. This means that the average German scientist now supervises 7 more students than in 2005, while the burden on administrators and professional staff has only increased by 2.7 students.

These numbers show the real winners and losers of the increased cash flow in this past decade: the students and scientists lose out, while university administrations benefit the most from the billions. At this point, to support the work of 60,438 permanently employed scientists in German universities, it is apparently required to permanently employ 135,897 administrative and professional employees.
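For readers who want to check the ratios quoted above, the arithmetic can be sketched in a few lines of Python. This uses only the rounded figures given in the text: 142,000 is a stand-in for "just over 142,000", and the variable and function names are purely illustrative, not part of the Destatis data set.

```python
# Figures for 2012 as quoted in the text.
# ASSUMPTION: 142_000 is a rounded stand-in for "just over 142,000".
full_time_scientists = 142_000
admin_and_professional_staff = 182_255
permanent_scientists = 60_438
permanent_admin_and_professional = 135_897


def ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, rounded to two decimals."""
    return round(numerator / denominator, 2)


# Administrative/professional employees per full-time scientist.
staff_per_scientist = ratio(admin_and_professional_staff, full_time_scientists)

# Permanent administrative/professional employees per permanent scientist.
permanent_staff_per_permanent_scientist = ratio(
    permanent_admin_and_professional, permanent_scientists
)

# Share of administrative/professional staff on permanent contracts.
permanent_share_of_admin = ratio(
    permanent_admin_and_professional, admin_and_professional_staff
)

print(staff_per_scientist)
print(permanent_staff_per_permanent_scientist)
print(permanent_share_of_admin)
```

With these inputs, the first ratio reproduces the 1.28 mentioned earlier, and the last one corresponds to the roughly 75% of staff who are 'virtually tenured'.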

In fact, for every permanent position that was created in this time, ten fixed-term positions were created, exacerbating the already abysmal job prospects of German early-career scientists. This parallels the development in many other research nations such as the US or the UK. The situation is most dramatic for early-career scientists. The tip of this huge iceberg is the increasing number of science scandals, such as the recent case of the young Haruko Obokata, whose mentor committed suicide after the manipulations of his student became known, or Felisa Wolfe-Simon, who called her arsenic-less strain of bacteria “GFAJ-1”: give Felisa a job.

As I remarked long ago, the trend to corporatize higher education parallels the rise in retractions. This rise in retractions is so rapid that by about 2045 every published article will be accompanied by the retraction of another article, if the current trend continues. Back in 2010, Amy Bishop killed three of her colleagues at University of Alabama in a shooting spree after she was denied tenure because her publication rate was deemed insufficient. Scientists in non-permanent positions today need to publish many articles in journals ranking high in a hierarchy that is devoid of any empirical justification. On top of that, they need to devise and find funding for especially expensive experiments, as the higher the funding, the higher the so-called ‘overhead’ that the universities receive. Last year, professor Stefan Grimm committed suicide after his employer, Imperial College London, told him he had 12 months to pull in 200,000 Pounds in research funding or be fired.

Today’s top scientists thus have been selected by their marketing competence to sell their work to high-ranking journals and by their ability to come up with expensive experiments. If their research is actually reliable and sound, it is pure coincidence. There exist no incentives today for just doing reproducible science.

The trends are clear on all fronts: business as usual has become untenable. There needs to be reform and it has to be international and far-reaching. Tinkering a little here and there is not going to cut it. On the up side, a lot can be established by universities themselves, without outside assistance: administration funds can be siphoned into research/teaching positions, and the billions now tied up in subscriptions can be used to develop a digital infrastructure which comprises a reputation system that rewards scientists for doing reliable science, once all subscriptions have been canceled. This is technically and financially feasible and within a sufficiently short time-frame. But action has to be taken now and it has to be decisive. The downside? It has to be collective action.

P.S.: We have made the raw data on which we have based our article available for everyone to check. Mistakes happen and we would not want to end up like Reinhart and Rogoff.

P.P.S.: Two related articles, one in English and one in German (PDF).

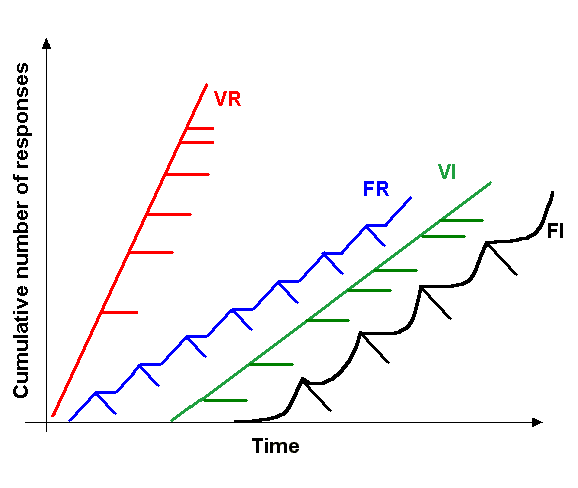

Skinner used the term “schedules of reinforcement” to describe broad categories of reward patterns which come to reliably control the behavior of his experimental animals. For instance, when he rewarded rats for pressing a lever at a given interval after the last reinforcement (i.e., fixed interval; FI), the animals would pause pressing the lever until just before the interval was over and then start pressing the lever like mad. When the number of presses is plotted cumulatively over time, this leads to a scalloped plot (black trace in Fig. 1). A similar, but clearly distinct curve can be observed when the reward comes only after a given number of lever presses (i.e., fixed ratio; FR): there, the animals stop pressing while they consume the reward and then resume repeatedly pressing the lever until the next reward is consumed (blue trace in Fig. 1). If the ratio or interval is varied, however, the animals more or less consistently press the lever at a rate given by the reward frequency (red and green traces, Fig. 1).

Fig. 1: Different categories of response patterns to different schedules of reinforcement. VR – variable ratio; FR – fixed ratio; VI – variable interval; FI – fixed interval

In an analogous way, if you tell scientists that they need a certain number of publications to get/keep their jobs, they will double the number of articles they publish. Likewise, if you tell scientists that they need to “acquire external funding” in order to get/keep their jobs, they will increase the number of grants they write, until it’s almost all they do. This latter response class is particularly absurd and counterproductive: the number of scientists keeps increasing, at least for now. In most countries, the increase is larger than the increase in public funds, or the public funds are even decreasing. This means that the success rate of getting research funding must drop, even if no researcher increases the number of grants they write. However, as each scientist needs grants to keep working, the dropping success rates have the same consequence as a VR schedule with a low probability of reward (red line, Fig. 1): scientists maintain a very high level of grant writing which matches the decreasing success rate: if it’s 10%, you have to – on average – write 10 grant proposals to get one of them funded. If the success rate is down to 5%, you have to write 20 and so on. As scientists multiply and keep increasing the number of grants they write, the success rates keep dropping accordingly, making the scientists write yet more proposals. A vicious cycle if ever there was one. Skinner couldn’t have devised a more pernicious schedule himself.
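The "10% means 10 proposals, 5% means 20" rule of thumb is simply the mean of a geometric distribution, 1/p. Here is a minimal Python sketch of that relationship, assuming (as a simplification not stated above) that every proposal is an independent trial with the same success probability. The function names are hypothetical, and the simulation is only there to illustrate the formula.

```python
import random


def expected_proposals(success_rate: float) -> float:
    """Mean number of proposals until the first funded one (geometric mean 1/p).

    ASSUMPTION: each proposal succeeds independently with the same probability.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


def simulate_proposals(success_rate: float, n_scientists: int = 20_000,
                       seed: int = 1) -> float:
    """Monte Carlo estimate of the same quantity, averaged over many scientists."""
    rng = random.Random(seed)
    total_attempts = 0
    for _ in range(n_scientists):
        attempts = 1
        # Keep writing proposals until one is funded.
        while rng.random() >= success_rate:
            attempts += 1
        total_attempts += attempts
    return total_attempts / n_scientists


for rate in (0.10, 0.05):
    print(rate, expected_proposals(rate), round(simulate_proposals(rate), 1))
```

Halving the success rate doubles the expected writing effort per funded grant, which is the feedback loop described above.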

That may seem absurd enough, but it is only half of the story. As research “income is crucial to the development of world-class research”, not only are universities ranked according to the volume of government funds they attract, but also (and likely consequently) the scientists within each institution are ranked according to the funds they attract. In Germany, for example, the amount of funding a scientist can attract can have direct effects on their salary. Thus, if there is a cheaper and a more expensive way to do the same experiment, it is in the scientist’s own best interest to choose the more expensive experiment. As it stands today, scientists should write as many grant proposals as possible and make them as expensive as possible. In other words: the successful scientist of today wastes not only time, but also tax funds. If you factor in that in order to get your grants funded, you also must publish in the so-called ‘top-journals’ where the key factor is getting past the professional editor, the most successful scientists of this day must be great salespersons: they need to sell grant agencies their wasteful research proposals and editors their unreliable papers. If we are lucky, some of them might also be great scientists.

I was recently sent a report from a university-wide working group on the publishing habits within the Freie Universität Berlin. I don’t think this document is available online, but I think I’m not doing anything illegal if I publish some of the survey results here. The working group polled all faculty members of the university on various questions concerning scholarly publishing. One of the questions was on the importance of Thomson Reuters’ Impact Factor for the respondents. Here are the results:

| Discipline | n= | none/low | middle/high |
|---|---|---|---|
| All respondents | 207 | 43% | 57% |
| Veterinary medicine | 17 | 0% | 100% |
| Biology, Chemistry, Pharmacy | 31 | 3% | 97% |
| Economics | 17 | 12% | 88% |
| Physics | 10 | 20% | 80% |
| Political and social studies | 17 | 24% | 76% |
| Didactics and psychology | 14 | 36% | 64% |
| Geosciences | 11 | 36% | 64% |
| Mathematics and computer sciences | 22 | 50% | 50% |
| Philosophy and humanities | 25 | 88% | 12% |
| Law | 11 | 91% | 9% |
| History and cultural studies | 24 | 92% | 8% |

It is quite clear that in all disciplines but the humanities, the Impact Factor is considered to be influential. In particular, the biomedical field is near unanimous in its submission to the Impact Factor, with physics, social and geosciences trailing.

Interestingly, a majority of respondents in all disciplines lament the high importance of bibliometric indicators in general and the Impact Factor in particular. This seems puzzling, given that it’s the faculty that make these numbers important, at least here in Germany.

The report emphasizes both the inconsistent (and unscientific) behavior of their own faculty as well as the detrimental consequences of the collusion between the big database companies, Thomson Reuters and Reed Elsevier, for the scientific community in general. The working group report specifically recommends that the university drop the use of bibliometric indicators in internal evaluations.

Footnote: The report also emphasizes that the importance of the Open Access debate is exaggerated in the public discussion and is not represented in the faculty responses, where Open Access plays a minor role and which shows that publication traditions are fairly constant over several generations of scholars and change only little.

Arguably, there is little that could be more decisive for the career of a scientist than publishing a paper in one of the most high-profile journals such as Nature or Science. After all, in these competitive and highly specialized days, where a scientist is published is all too often more important than what they have published. Thus, the journal hierarchy that determines who will stay in science and who will have to leave is among the most critical pieces of infrastructure for the scientific community – after all, what could be more important than caring for the next generation of scientists? Consequently, it receives quite thorough scrutiny from scientists (bibliometricians, scientometricians) and there is a large variety of journals which specialize in such investigations and studies. Thanks to the work of these colleagues, we now have quite a large body of empirical work surrounding journal rank and its consequences for the scientific community. This evidence points to Nature, Science and other high-profile journals, rather than publishing the ‘best’ science, actually publishing the methodologically most unreliable science. One of several unintended consequences of this flawed journal hierarchy is that the highest ranking journals have a higher rate of retraction than the lower ranking journals. The data are also quite clear that this disproportionate rate of retractions is largely (but not exclusively) due to the flawed methodology of the papers published there, and only in small (but significant) part due to the increased attention high-profile journals are attracting.

Perhaps not surprisingly, both Nature and Science actively ignore and disregard the available evidence in favor of less damning speculations and anecdotes. Given that both journals were made aware of the evidence as early as 2012, one could be forgiven for now starting to speculate that the journals are attempting to safeguard their unjustified status by suppressing dissemination of the data. First, after rejecting the publication of the self-incriminating data, Science Magazine published a flawed attempt to discredit lower ranking journals, concluding, implicitly, that one had better rely more on the higher ranking, established journals. Then, barely a fortnight later, Nature Magazine (which also rejected publication of actual data on the matter) followed suit and published an opinion piece on how scientists feel about journal rank. Today, completing the triad, Nature publishes something like a storify from different tweets, citing the fact that higher ranking journals have higher retraction rates and speculating if the higher rates may stem from increased attention.

Needless to say, we have maybe not entirely conclusive, but pretty decent empirical data showing that there are several factors contributing to this strong correlation and that increased attention to higher ranking journals is likely to be one of these factors, but probably a minor, if not the least important one. Instead, the data suggest that the methodological flaws of the papers in high-profile journals, in conjunction with the incentives to publish eye-catching results are much stronger factors driving the correlation. The consistent disregard for the empirical data suggesting that the current status of high-profile journals is entirely unjustified, could raise the suspicion that this last news piece in Nature Magazine may be part of a fledgling publisher strategy to divert attention away from the data in order to protect the status quo.

However, not only due to Hanlon’s Razor, one has to consider the more likely alternative that none of the various authors or editors behind the three articles actually is aware of the existing data. For one, none of these authors/editors was involved with handling the manuscript in which we reviewed the data on journal rank. Second, the first article, Bohannon’s sting, was so obviously flawed, one wonders if the author is familiar with any empirical work at all, let alone the pertinent literature. Third, the evidence points to the editorial process at high-ranking journals selecting flawed studies for publication – it appears that only very few, if any, of the editors at these journals are any good at what they do. Given these three reasons, one can only conclude that this string of three entirely misleading articles can only be due to “stupidity” and not to “malice”, to use Hanlon’s words.

UPDATE: I had no idea how wrong I was, until I saw this tweet from Jon Tennant:

@brembs @CorieLok I see your comment. I did push your article when asked for comments, but this didn't make it into the article

— Jon Tennant (@Protohedgehog) September 18, 2014

In other words, the author of the article (which I assume must be Corie Lok) did know about the data we have available and nevertheless pushed the anecdote. Given this new information, it may be time to reject Hanlon’s razor and exclude “stupidity” in favor of “malice”?

Last week, Elizabeth Pennisi asked me to comment on the recent paper from Schreiweis et al. entitled “Humanized FoxP2 accelerates learning by enhancing transitions from declarative to procedural performance”. Since I don’t know how much, if anything, of my answers to her questions will end up in her article, I thought I might expand my answer into a post about this very interesting work.

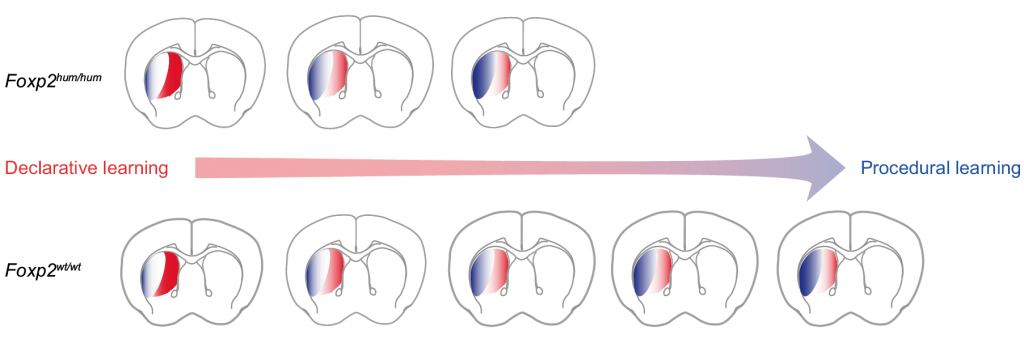

As the title implies, Schreiweis et al. have tested transgenic mice in which the mouse version of the language-related gene FoxP2 was replaced with the human version. They found that the timing of when repeated behaviors become stereotypic is altered, such that the behaviors become stereotypic earlier in the humanized mice than in the unaltered animals.

Importantly, this was only observed in tasks where two learning systems were engaged simultaneously. In the literature, there are several accounts of how to label these learning systems. In vertebrate learning they are often referred to as procedural vs. declarative (also in Schreiweis et al.), in animal navigation many authors refer to them by allocentric vs. egocentric, and in Drosophila fruit flies we have coined the terms world- vs. self-learning. The gist behind these word pairs is that brains as different as those from insects and mammals seem to adhere to a common functional organization that makes a very fundamental distinction between self and non-self on various levels. Of relevance to the current research is that external cues are treated by different brain regions and learning of relationships among external cues is mediated by different molecular processes than the internal processes controlling behavior. Hence our distinction between world- and self-learning.

In situations where both world- and self-learning can occur simultaneously, world-learning commonly dominates in the first phases of training, while self-learning kicks in later. There is converging evidence from vertebrates and invertebrates suggesting that this staggering is probably accomplished by inhibitory connections from circuits engaged in world-learning slowing down the circuits involved in self-learning. Thus, it is this negotiation between self- and world-learning which provides us with time to practice our skills before they become automatic. One may also say that this negotiation is the reason why it takes time (and how much time it takes) to form habits. I’ve written a longer account of this negotiation on the occasion of the poster publication of some of the Schreiweis et al. work in 2011.

The recently published work by Schreiweis et al. now contains both molecular genetic and physiological results in addition to the behavioral data. It adds weight to the so-called ‘motor-learning hypothesis’ that came up some time around 2006/7 or thereabout. This hypothesis posits that FoxP2 is mainly involved in the motor, or speech component of language, i.e., learning to control the muscles in the lips, tongue, vocal cords, etc. in order to articulate syllables and words. The movements of these organs have to become stereotypic in order to reliably produce understandable language and the main experimental paradigms for this stereotypization of behavior (independent of language) have been procedural learning and habit formation. This work provides further evidence that indeed FoxP2 is an important component of the learning process that leads to automatic, stereotypic behavior.

In particular, it suggests that FoxP2 is involved in the control of the process of stereotypization, i.e., at what point the behavior shifts from being flexible to becoming more rigid. Until this work, the evidence from vertebrates and invertebrates has pointed to FoxP genes being involved in the automatization of behavior. Now, this evidence is extended to also – at least in mammals – include the negotiation process, which I don’t think anybody had on the radar thus far.

One of the most interesting mechanistic questions that derive from the fact that these mice only showed an effect on the negotiation between the two learning systems (and not on the individual systems when isolated), is how this negotiation takes place. Again, converging evidence from invertebrates and vertebrates points towards inhibitory connections from the world-learning processes keeping a brake on the self-learning processes. Is FoxP directly affecting these inhibitory connections, or is it just subtly increasing the relative strength of the procedural/self mechanism, such that it cannot be detected in individual experiments where the components have been isolated, but only in experiments where the negotiation actually takes place? The physiological results by Schreiweis et al. point towards the latter: induction of long term depression (LTD) in the dorsolateral, but not the dorsomedial striatum is enhanced in the humanized mice – and the dorsolateral striatum is the region thought to be involved in self-learning, while some world-learning processes have been localized to the dorsomedial striatum.

Mice with the humanized version of FoxP2 form habits earlier and this might be due to earlier formation of LTD in the dorsolateral striatum. (Fig. S7 in Schreiweis et al.)

The generation of these humanized mice was a particularly cool aspect of the work. Compared to other, more common transgenic manipulations (e.g. knock-outs), this humanization of FoxP2 is a rather sophisticated and subtle alteration with rather nuanced but nevertheless very exciting consequences. Many of the more drastic FoxP manipulations are homozygous lethal and since there is evidence for positive selection of the human variant, it was very straightforward to try and see what the human variant would do in an organism that doesn’t normally express this version. Moreover, such a subtle alteration may uncover more subtle roles of FoxP than the cruder manipulations have been able to. In fact, the most specific behavioral consequences of any gene manipulation have commonly been the most subtle of genetic manipulations. Therefore, the scientific value of this manipulation extends far beyond the fact that it mimics the human gene.

One needs to keep in mind, though, that it was only the structure of the protein that was humanized, the regulatory region of the gene was not altered, i.e., the putative expression pattern of the humanized FoxP2 gene is still that of the mouse version (and I don’t know how different the human regulatory region is from the mouse one – in fact, I’m not sure if we have full knowledge about the regulatory region of FoxP2, yet).

Thus, it is quite amazing that these mice showed any difference at all to WT mice, subtle as these differences may be perceived to be.

From a wider perspective, this work adds to our growing understanding of the relation between learning and language acquisition. Schreiweis et al.’s results fall very nicely within a string of recent work suggesting that a major component of language acquisition is based on a form of learning called operant conditioning. The debate about the relevance of this form of learning for language is at the core of the history of ideas in neuroscience and psychology. In 1957, BF Skinner published “Verbal Behavior” in which he made the sweeping claim that language was essentially acquired via operant learning.

Two years later, Noam Chomsky took Skinner to task in what would become one of the cornerstones in the fall of behaviorism and the rise of cognitive neuroscience and with it, of course, Chomsky’s rise to fame as one of the US’ leading intellectuals.

If one can summarize Chomsky’s massive (32 pages) book review in a single sentence, it might be: “it may look like operant learning, but you don’t have any evidence”. Instead, Chomsky went on to famously propose that we all have inborn language acquisition devices as well as ‘universal grammar’. Of course, Chomsky did not provide any evidence, either. In the absence of any evidence on either side, Chomsky’s outstanding rhetorical skills prevailed and changed nearly all of psychology and neuroscience for the following five decades until this day (I’m simplifying and exaggerating somewhat, for the sake of brevity and argument).

In the last few years, evidence has accumulated not only that the concept of universal grammar likely is untenable, but also that indeed operant learning (and especially the form of operant learning called motor learning) is an important if not crucial component of language acquisition, in particular the speech component of language. For instance, FoxP manipulations in flies specifically affect operant self-learning, but not other forms of learning. Thus, the currently available evidence points towards an ancestral FoxP function in self-learning, a function that is not only conserved in humans, but one that has been further honed by evolution to allow for the acquisition of language. This work by Schreiweis et al. falls neatly within this last string of publications tilting the outcome of this long-standing debate more and more in Skinner’s favor.

As we don’t know the exact mechanism by which the negotiation between self- and world-learning processes takes place, one can only speculate what advantage the current human version of the FoxP2 gene might have conferred. One interesting aspect here might be that it may have been critical for the evolution of language to speed up the stereotypization of certain orofacial movements during the first attempts to articulate.

A further, even more tentative speculation might be that this speeding up was adaptive, because it allowed more effective communication between parents and their infants, at an earlier time point in the development of the child, when it was beneficial for the vulnerable infant to convey its status to its caregivers in a more nuanced way than just crying. In this way, children which were able to speak earlier may have had a survival advantage over those that spoke later. But then again, at this point, such speculations are merely just-so stories.

On a personal note, being so used to data mostly falsifying my hypotheses over the last 20 years, it makes me quite nervous that now in this particular research field, everything seems to fit so well together. If something fits so well with one’s ideas, one should be especially cautious (beware of confirmation bias!) and think hard to make doubly sure that the next experiments are designed such that they can easily yield results that contradict the current hypothesis, more so than usual.

And on a side note, it is quite an irony that three years ago a reviewer (for the same journal that has now published this work, PNAS) heavily criticized our essentially analogous conclusions. Now, three years later, PNAS is finally ready to publish work that supports the motor hypothesis.

Schreiweis, C., Bornschein, U., Burguiere, E., Kerimoglu, C., Schreiter, S., Dannemann, M., Goyal, S., Rea, E., French, C., Puliyadi, R., Groszer, M., Fisher, S., Mundry, R., Winter, C., Hevers, W., Paabo, S., Enard, W., & Graybiel, A. (2014). Humanized Foxp2 accelerates learning by enhancing transitions from declarative to procedural performance Proceedings of the National Academy of Sciences DOI: 10.1073/pnas.1414542111

This is an easy calculation: for each subscription article, we pay on average US$5000. A publicly accessible article in one of SciELO’s 900 journals costs only US$90 on average. Subtracting about 35% in publisher profits, the remaining difference between legacy and SciELO costs amounts to US$3160 per article. With paywalls being the only major difference between legacy and SciELO publishing (after all, writing and peer-review is done for free by researchers for both operations), it is straightforward to conclude that about US$3000 is going towards making each article more difficult to access than if we published it on our personal webpage. Now that is what I’d call obscene.

Just to break the costs of legacy publishing down in detail:

| Cost component | US$ per article |
|---|---|
| Publisher profits | 1750 |
| Paywalls | 3160 |
| Actual costs of typesetting, hosting, archiving, etc. | 90 |
| Sum | 5000 |
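The breakdown can be reproduced with a short Python sketch. It uses only the three figures from the text (US$5000 per legacy article, US$90 per SciELO article, about 35% publisher profit) and treats the SciELO cost as the 'actual' cost of publishing, which is the assumption the argument rests on. The function and key names are illustrative.

```python
# Figures from the text, in US$ per article.
LEGACY_PRICE = 5000        # average price paid per subscription article
SCIELO_COST = 90           # average cost per SciELO article
PROFIT_MARGIN_PERCENT = 35  # "about 35%" publisher profits


def legacy_cost_breakdown(price: int, actual_cost: int, margin_percent: int) -> dict:
    """Split the legacy price into profits, actual costs and the remainder.

    ASSUMPTION: the SciELO cost stands in for the actual cost of publishing,
    so everything that is neither profit nor actual cost is attributed to paywalls.
    """
    profits = price * margin_percent // 100
    paywalls = price - profits - actual_cost
    return {
        "Publisher profits": profits,
        "Paywalls": paywalls,
        "Actual costs": actual_cost,
        "Sum": profits + paywalls + actual_cost,
    }


breakdown = legacy_cost_breakdown(LEGACY_PRICE, SCIELO_COST, PROFIT_MARGIN_PERCENT)
for item, dollars in breakdown.items():
    print(f"{item}: {dollars}")
```

Changing `PROFIT_MARGIN_PERCENT` shows how sensitive the 'paywall' share is to the assumed profit margin.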

Today, our most recent paper got published, before traditional peer-review, at F1000 Research. The research is about how nominally identical fly stocks can behave completely differently even if tested by the same person in the same lab in the same test. In our case, the most commonly used wild type fly strain is called “Canton S” or CS for short (interestingly, there is no ‘Canton S’ page on Wikipedia). Virtually every Drosophila lab has a Canton S stock in their inventory, but of course, it may have been decades since these flies have seen any other Canton S flies from a different place. In evolutionary terms one would call this “reproductive isolation”, meaning that there is no gene flow between the different Canton S stocks in the different labs around the world, even though they all originated from the same stock at one point and are all referenced as CS in the literature.

Reproductive isolation is one of several factors which are required for speciation. Therefore, we always kept the Canton S stocks we have received from different labs separate in our lab, to make sure we always have the appropriate wild type strain for any genetically manipulated strain we might get from that lab. In total, we had five different Canton S strains which we tested in Buridan’s paradigm:

To our amazement, it turned out that there were considerable differences between these nominally identical fly strains. In fact, the differences were large enough to have classified some of the strains as ‘mutants’. As we have some knowledge about the ancestry and pedigree of each strain, we speculate that what created the differences between the strains to begin with are founder effects, either when a sample was taken from one lab and transferred to the next, or during the history of the strain in an individual laboratory. It seems unlikely that there has been any significant adaptation to the particular laboratory environment, but at this point this is difficult to rule out conclusively.

This phenomenon has been observed in other model systems before and it is not quite clear how to solve it, as the logistics of developing a global “mother of all wild type stocks” are a nightmare. We felt that this issue was not considered enough in the Drosophila community and the Buridan results provide for an excellent case study. Especially in a time when reproducibility is on everybody’s agenda, it is crucial to know what can happen when trying to replicate a phenomenon that was observed in one Canton S strain elsewhere with the Canton S strain available in one’s own lab.

As replicability is also one of the issues of this paper, we decided to make the entire process of the paper as transparent as possible: not only is the paper published open access, it is also published before traditional peer-review. The ensuing peer-review will then be made open such that everyone can see what was changed in the process. The different versions of the paper will also remain accessible. Only after our paper has passed regular peer-review, will it be listed in the major indices, such as PubMed.

Above and beyond the openness of the publication process, we also decided to pioneer a technology we should have developed many years ago. In this paper, as a proof of concept, one of the figures isn’t provided by us, but we have merely sent our data as well as our R code to the publisher and the figure is generated on the fly. In the future, this will save us a tremendous amount of work: we are already setting up our lab such that our data is automatically published and accessible. The code is either open source R code from others, or made open source by us as we develop it. Hence, at the time of publication, all we need to do is write the paper and then submit just the text with the links to the data and the code together with some instructions on how to call the code to generate the figures. No more fiddling with figures ever again, once this becomes the norm – just do your experiments, write the paper and hit ‘submit’.

Another advantage of that method is that not only does it save us time and effort, it also means that reviewers and other readers only need to double click the figure to modify the code and, e.g. look at another aspect of the data. In this version we just let the user decide on different ways to plot the data, but it shows the principle behind the implementation.

In order to both broaden the database for the phenomenon studied here, and showcase the power of the technology, we are also inviting other labs to contribute their Canton S Buridan data to see how it compares to the data we have. As of now, we show data from only one additional lab in a static figure, but in future versions of the paper, we will have a dynamic figure that gets updated as new data gets uploaded by users.

I’m not deluding myself that our little paper will have much of an impact either scientifically or technologically. Of course we’d all be more than delighted if it did, but at the very least, we’re showing that even with very limited resources and some creativity, you can accomplish something that, extrapolated to a larger scale, would be transformative.

This is the story behind our work on the function of the FoxP gene in the fruit fly Drosophila (more background info). As so many good things, it started with beer. Troy Zars and I were having a beer on one of the ICN evenings, I think it was in Vancouver in 2007. I had recently learned about the conserved role of FoxP2 in songbirds, out of one of the labs in Berlin, where I was based at the time. As already Skinner had proposed that language learning was an operant conditioning process and as song learning in birds was also often characterized as a form of operant learning, I wondered aloud how cool it would be if flies had such a gene and we could test them in our operant learning experiments as well. This would really suggest a possibility to unify vocal learning and operant learning in a single biochemical pathway.

“Shhh, don’t tell anybody, I have them!”, Troy replied.

It turned out that at the time, the FoxP-related gene in flies had not been annotated in the genome yet. Troy had performed the database sequence search and performed some preliminary molecular experiments to make sure he really had the dFoxP gene. He even noticed that one sequence just downstream of the gene was in fact the last exon of the gene and that the automated curation process had missed this detail. He had ordered three fly lines each with one insertion mutation in or near this last exon and replaced the rest of the genome with the background of a control strain in his lab, “Canton S”. He even had done some preliminary behavioral tests on the courtship of these ‘cantonized’ lines.

We agreed that he would send me the lines and I would test them in our operant conditioning experiments. When I received the lines I was excited and started testing them as soon as they hatched. The first day was a disappointment: I had randomly chosen one of the three lines and tested a few of them along with Troy’s Canton S control flies. Both groups learned just fine. I was almost going to give this up as a beautiful hypothesis slain by an ugly fact, when I reminded myself that one should not rest one’s conclusions on a single day of testing. So I decided to test them at least for the rest of the week and then see what happened. On each of the following days, learning scores were consistently zero, such that, by the end of the week, the combined score of all the animals didn’t look all that good any more. The final results, several weeks later, can now be seen in Fig. 3 of our paper.

In order to find out what was going on with FoxP gene expression in these mutants, FoxP2 specialist Constance Scharff in the neighboring department of behavioral biology in Berlin teamed up with Jochen Pflüger in our department to apply for some funding for this project. The results of this fruitful collaboration can be seen in Figs. 2 and 5c. It was then graduate student, now postdoc Ezequiel Mendoza who did all the molecular biology.

Because FoxP genes are transcription factors regulating the expression of other genes during brain development, I was curious if the brain structure of these flies was any different from that of control flies. Jürgen Rybak was a specialist on insect brain anatomy and had recently left the department in Berlin. I gave him two vials of flies and asked him if he could quantify the main brain regions ‘on the side’ of his regular day job at the Max Planck Institute. Jürgen worked tirelessly and after many months of slogging came up with some very subtle results, now depicted in Fig. 6. To this day, we’re still unsure what these data really ought to tell us, other than that FoxP is involved in brain structure and development in flies as well. This figure, btw, has two examples of fly brains that you would be able to rotate and zoom in and out of in 3D, if PLoS were able to support 3D PDF files. As they don’t, you have to dig into the supplemental information, go to Fig. S3 there and open the PDF file. Then, if you click on either or both of the brains, you can use the 3D functionality we have embedded there.

Another parallel between vocal learning and operant conditioning is the observation that training/practicing for extended periods of time leads to a stereotypization or automatization of the behavior trained. In songbirds, this is called “crystallization” of the song, in operant conditioning it is called habit formation. Flies show this sort of habit formation as well, but we had no idea if it was the same process as the much quicker form of learning we had tested before, or if this was something that looked similar on the behavioral level, but the neurobiology was quite distinct. So the postdoc in our lab at the time, Julien Colomb, set out to not only replicate my own results with the FoxP mutants, but to also test the much trickier habit formation experiment in the mutant flies, using his own, new machine. He found that indeed these flies were also deficient in habit formation, replicating not only my results, but also strongly suggesting that our form of operant learning (which we have termed “operant self-learning”) and habit formation are likely biochemically the same process.

Because having just one mutant allele (the other two lines did not fly well enough for our experiments) is not enough evidence to conclusively tie these phenotypes to the FoxP gene, we took advantage of the fact that the last exon, where the insertion mutation resided, was initially listed as a separate gene in the databases. The method of choice was to attempt to knock down dFoxP expression using RNAi. We wanted to mimic the original mutation as specifically as possible, so we wanted only to knock down the dFoxP isoforms which included the last exon. Usually, this is not possible without designing your own transgenic constructs. However, because the last exon was its own gene in the databases, there was a line that contained an RNAi construct for just the last exon. So I ordered that line, crossed it to another line that would express the RNAi construct specifically in all neurons and tested the flies. This was when I started to get very, very nervous: the flies with the RNAi-targeted dFoxP also did not learn in operant self-learning! In most of my research career, things have turned out the opposite of what I had expected, in almost all of my experiments. Did I do something, subconsciously, to bias my results? After all, this was now the second experiment in a row that turned out just as one would dream of! I couldn’t find anything wrong, measured yet more flies in different crosses just to be sure, but the result remained the same. As long as nobody else finds out what went wrong, I have to accept the data and acknowledge that one of my hypotheses turned out to be correct for once after all. That’s a very weird and strangely disconcerting feeling.

Everything had been smooth sailing so far, so clearly, there had to be something that would put a spoke in our wheels. We decided to submit the manuscript to PNAS just before the winter break in 2011, on December 21, with the title “Drosophila FoxP is necessary for operant self-learning”. One reviewer liked it, but the other summarized their review as follows:

the data are made to bear the weight of an elaborate hypothesis, and they are literally crushed by it, like a tiny matchstick house beneath a bowling ball.

The reviewer appeared particularly miffed by something he hadn’t heard of before:

to the best of my knowledge the distinction between operant self-learning and operant world-learning is one that is not widely acknowledged in the learning and memory community. Indeed, the senior author and his colleagues of this manuscript may be the only people in the world who hold that there is such a distinction.

We dared to submit something that wasn’t yet part of the wider learning and memory community! How preposterous! It appears that this reviewer was not very keen on new discoveries – only processes and observations “widely acknowledged by the community” should be published. Novel, groundbreaking or controversial findings need not be submitted. A very strange perspective to take for a scientist indeed, especially since at the point of submission we already had two peer-reviewed papers with this distinction published. The reviewer struck the death blow to the paper:

If one strips away the elaborate theoretical superstructure of this paper, for which the evidence is, I believe, shaky at best, what is one left with? Basically, the authors have shown that a FoxP fly mutant performs abnormally on one operant conditioning task, but normally on another. This is well and good, but not, to my mind, sufficient for a PNAS paper.

And of course, the classic smack down that will kill any paper, but at least to my mind is essentially unethical: ask for virtually every single potential experiment to be made:

To accept the authors’ ideas, we would have to know whether or not FoxP mutant flies are deficient in other forms of learning. In other words, what is the evidence that the learning abnormalities in these flies are confined to “self-learning” motor tasks? Remarkably, the authors do not test their mutants on any of the other learning tasks available for Drosophila, among which are olfactory conditioning, habituation and conditioning of proboscis extension, and nociceptive sensitization. Knowing the full range of learning dysfunctions of FoxP mutants would help clarify the important issue of whether or not this class of genes is devoted to operant self-learning as the authors believe.

Not one, not two, but 4 different behavioral experiments is what we should do, topped up by “the full range of learning dysfunctions”. If any reviewer for any of the papers I’m handling as an editor ever should request anything like that from one of the authors, I will definitely report the person to his department for unethical behavior: asking for every experiment on the planet is just something that gives away bias. But that’s not even enough. On top of doing every single behavioral learning experiment the world knows for flies, we should also do a full-scale molecular genetic interaction study:

It would also be important to know whether or not FoxP mutants have defects in PKC signaling. Does Drosophila FoxP even have a PKC binding site? Can overexpression of PKC isoforms in flies rescue the learning deficits of the FoxP mutants?

Asking for essentially a decade or two of experiments in a review for a single paper is clearly unethical in my book and I notified PNAS of that. Obviously, other than acknowledgment of receipt, I never heard from them again. I guess there may be something to the talk and gossip about PNAS…

We decided to instead submit our work to PLoS One, after we had tackled the more reasonable suggestions by the other reviewer. In April 2012, we were ready and submitted the revised manuscript. In May, we got a ‘major revisions’ notification, asking us to essentially replace the semi-quantitative PCR with quantitative PCR when we test for the effectiveness of the RNAi procedure. I thought this was a good and reasonable suggestion as I had started to become suspicious that the regular PCR was quite liable to false bands: both missing and appearing bands.

It took us more than a year to get the qPCR data, evaluate them and digest them: it had turned out that the RNAi had not knocked down the dFoxP mRNA in any way we would be able to detect. Thus, it was possible that the behavioral phenotype was due to another gene, serendipitously affected by the RNAi construct (a so-called ‘off-target effect’). This left us with just one allele and an inconclusive RNAi result. I researched the literature as I had recently been teaching the RNAi method to undergraduates and had taught that under some conditions, the mRNA is not degraded, but sequestered. Now what were these conditions again? In brief, if the match between RNAi construct and target region of the gene is not 100%, the mRNA is sequestered rather than chopped up. So we (i.e., Ezequiel) cloned and sequenced this region for all of our lines and did indeed find several mismatches in the target region. Lucky to have an explanation for a phenotype without mRNA knockdown, but nervous that it wouldn’t be sufficient, we re-submitted in August 2013.

As we feared, the reviewers did not find our explanations sufficient and again sent the manuscript back to us. In the meantime, I had moved to Regensburg from Berlin and discussed the paper with colleagues at lunch. One of them, José Botella-Munoz, suggested I try a classic genetic experiment from the 20th century: cross the mutants and the controls over a deficiency that spans the dFoxP locus! I ordered the flies, crossed and tested them and to our relief, the results confirmed that the mutation in the FoxP gene was the most likely culprit for the learning phenotype. Find these results now in Fig. 4 of the paper. In addition to these results, we also changed the title to “Drosophila FoxP mutants are deficient in operant self-learning”, as we still can’t fully rely on the RNAi data.

During the revision process, what all of us secretly feared happened: another behavioral fly FoxP paper was published. These authors had not done any learning experiments, but had found an involvement of FoxP with motor problems of the flies. However, they relied heavily on the RNAi method, but without using qPCR, only the regular PCR which was the reason our manuscript was initially rejected at PLoS. So that was something of a shock, but not too bad, at least not for the authors (other colleagues got hit worse by some of the results in this paper). Just days before we submitted what would become our final version, Science published a paper on FoxP in flies that flew in the face of everything published about FoxP genes so far, but apparently without exchanging the genetic background of the mutants, without meticulous controls to rule out motor defects and without even attempting to test for the efficiency of the RNAi procedure. There is a more in-depth treatment of this paper in my previous post. Either way, we have several posters and blog posts to show that we were on dFoxP already several years ago.

Now, more than 2.5 years after the first version of the manuscript was submitted to PNAS, our work is finally published in PLoS One, and it will be exciting to see if others can now find the mistake we couldn’t find and show us why this is all wrong 🙂

Mendoza, E., Colomb, J., Rybak, J., Pflüger, H., Zars, T., Scharff, C., & Brembs, B. (2014). Drosophila FoxP Mutants Are Deficient in Operant Self-Learning PLoS ONE, 9 (6) DOI: 10.1371/journal.pone.0100648

See this post with the associated press releases on brembs.net.

The Forkhead Box P2 (FOXP2) gene is well-known for its involvement in language disorders. We have discovered that a relative of this gene in fruit flies, dFoxP, is necessary for a type of learning called operant self-learning, which resembles some aspects of language learning. This discovery traces one of the evolutionary roots of language back more than half a billion years before the first word was ever spoken. Intriguingly, dFoxP-function also differentiates between self and non-self, a key process malfunctioning in autism and schizophrenia disorders, in which FOXP2 has also recently been implicated. Finally, dFoxP is also important for habit formation, a common animal model for addiction.

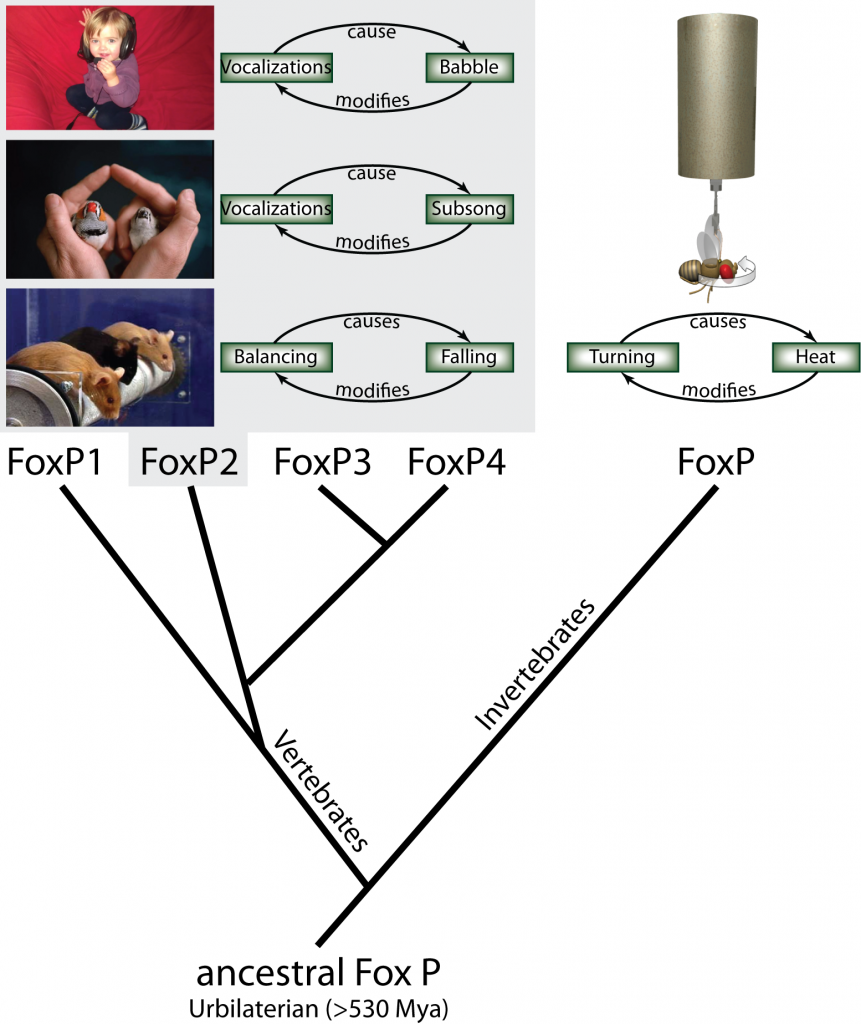

Even though language is so much a part of what it means to be human, the evolution of this strikingly singular trait is still clouded in mystery. Genetic disorders with language impairments are a particularly effective route to uncovering the biological roots of language. Most prominently, one mutation in the FOXP2 gene appears to affect language acquisition in afflicted patients, without other obvious impairments (1). This gene is one of four members of the FoxP gene family which have evolved in vertebrate animals from a single ancestral FoxP gene by serial duplications. In invertebrates, these duplications never took place and thus the single currently existing invertebrate FoxP gene can serve as a model for studying the function of the extinct, ancestral gene (Fig. 1).

Fig. 1: Using operant conditioning to test invertebrate FoxP function. From the single ancestral FoxP gene, four different genes have evolved in the vertebrate lineage through serial duplications, while invertebrates have retained a single copy of the gene. In an operant feedback loop, spontaneous actions are followed by a given outcome as a consequence. Depending on that outcome being desirable or not, the frequency of the action increases or decreases in the future. For instance, vocalizations of a human infant are followed by the perception of the resulting babbling. The deviation from the intended articulation modifies future vocalizations until language is formed. Similarly, in songbirds, the perceived difference between the juvenile bird’s (right) own subsong and the memorized song from an adult tutor (left) modifies future vocalizations until the species-specific adult song is produced. In mice, balancing in the rotorod experiment is followed by eventual falling, which provides the feedback to improve subsequent balancing movements. All three examples have been shown to be dependent on normal FoxP2 function. Analogously, we have tested fly FoxP function by tethering flies and measuring their turning attempts in stationary flight. Some turning attempts (e.g. to the right) are followed by a punishing heat beam, others (e.g., to the left) are rewarded with turning the beam off. Continuous feedback modifies the fly’s turning attempts towards the direction where the heat is off.

Studies on FOXP2 patients revealed apraxia, i.e., the inability to articulate words and sentences, as one major symptom. Evidence from songbirds and transgenic mouse models seems to confirm the suspicion that the function of FoxP2 might be found in the speech component of language (1). More than fifty years ago, the behaviorist B.F. Skinner proposed that language might be acquired through an operant learning process (2): the first more or less random utterances (babbling) of infants are rewarded by their parents and correct utterances more so than incorrect ones. Moreover, just as imitating any movements, the ability to correctly imitate the words of others might be inherently rewarding. Eventually, the infant learns to correctly speak the words required to communicate their needs and affections.

Inspired by the possibility to test for one of the evolutionary roots of language in an invertebrate animal, we used a learning experiment in the fruit fly Drosophila which paralleled the operant concept proposed by Skinner: the tethered animals first produce more or less random behaviors (including turning attempts, left or right) and the experimenter rewards only designated ‘correct’ ones until the animal is spontaneously generating predominantly ‘correct’ behaviors (e.g. left turning attempts; Fig. 1). Importantly, we also used a control experiment, in which the animals’ behavior not only affected whether they would receive the reward or not, but also which color their environment was. Previous results had shown that in this control situation flies tend to learn more about the coloration of their environment than about their own behavior (3). If the function of dFoxP in flies were analogous to that of FOXP2 in humans, we would expect it to be necessary for the first experiment (‘operant self-learning’), but not for the second experiment (‘operant world-learning’).
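The operant feedback loop described above can be sketched as a toy simulation. This is only an illustration of the principle and not the analysis used in the paper: the update rule, the `learning_rate` parameter, the number of attempts and the idea of modelling a learning-deficient fly as `learning_rate = 0` are all assumptions made up for this example.

```python
import random


def simulate_fly(learning_rate, n_attempts=2000, seed=1):
    """Toy operant loop: right turning attempts are 'punished'.

    Returns the fraction of left turning attempts in the second half
    of the session. All parameters are hypothetical.
    """
    rng = random.Random(seed)
    p_left = 0.5                      # initially no turning preference
    late_start = n_attempts // 2
    lefts_late = 0
    for i in range(n_attempts):
        turned_left = rng.random() < p_left
        if not turned_left:
            # Right turn -> heat on: shift future attempts towards left.
            p_left += learning_rate * (1.0 - p_left)
        if i >= late_start and turned_left:
            lefts_late += 1
    return lefts_late / (n_attempts - late_start)


learner = simulate_fly(learning_rate=0.05)
non_learner = simulate_fly(learning_rate=0.0)
print(f"left attempts, learner: {learner:.2f}")
print(f"left attempts, non-learner: {non_learner:.2f}")
```

In this sketch the "learner" ends up producing mostly left turning attempts, while the fly whose behavior is never modified by the feedback keeps turning left and right about equally often.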

Ever since Skinner’s proposal, these kinds of experiments had been discussed, but until now they have not been technically feasible. In his critique of Skinner’s proposition, the linguist Noam Chomsky dismissed the idea of operant experiments conceptually paralleling language acquisition as “mere homonyms, with at most a vague similarity of meaning” (4).

In order to be able to attribute any effect of our manipulations in the flies to the dFoxP gene, we used two different strategies. In the first, we tested flies with a mutation in the dFoxP gene in operant self- and world-learning. In the second we used the same two experiments to test flies in which we had experimentally targeted the dFoxP gene such that its expression was reduced. Both methods yielded essentially the same result: dFoxP is necessary for operant self-learning but not for operant world-learning, lending support to the hypothesis that operant self-learning may be one of the evolutionary ancestral capacities which had to exist in order for language to be able to evolve (i.e., an exaptation).

Another parallel between operant and language learning is the fact that prolonged practice leads to an automatization of the movements required. Only when a language is new do we need to think about the pronunciation and articulation of words and sentences. Once we are fluent, we only need to articulate our thoughts. Similarly, other movements can be trained with feedback until they become automated. Riding a bike, writing, tying shoe-laces, etc. are all examples of such automatic behaviors called skills or habits. If the learning mechanism for which dFoxP is required constitutes an exaptation for language acquisition and the speech component of language is a special form of a skill or a habit, then dFoxP mutant flies should be deficient in habit formation. To test this hypothesis, we used dFoxP mutant flies in a prolonged operant world-learning paradigm known to induce habits (5). Further corroborating our hypothesis, these mutant flies showed a severe deficit in habit formation.

In vertebrate animals, mutations in the FoxP2 gene lead to alterations in the brain structure of the affected individuals (1). This is thought to be due to the ability of FoxP genes to alter the expression of other genes directly involved in brain development. To test if the fly dFoxP gene is also involved in brain development, we reconstructed the three-dimensional structure of the brains of flies with a mutated dFoxP gene in the computer. Using computer-assisted volume analysis, we discovered alterations in the fly brain structure which were too subtle to spot with the human eye, even at large magnifications. These results indicate that in flies as in vertebrate animals, FoxP genes may act as gene regulators during brain development.

Taken together our results provide evidence for a structural and functional conservation of FoxP genes since the split between vertebrate and invertebrate animals. This ‘deep’ homology spans vastly different brain organizations.

Source: Mendoza E, Colomb J, Rybak J, Pflüger H-J, Zars T, Scharff C, Brembs B (2014): Drosophila FoxP mutants are deficient in operant self-learning. PLoS ONE: 10.1371/journal.pone.0100648

Raw data: Mendoza, E; Colomb, J; Rybak, J; Pflüger, H-J; Zars, T; Scharff, C; Brembs, B (2013): Drosophila FoxP molecular, anatomical and behavioral raw data. figshare. https://dx.doi.org/10.6084/m9.figshare.740444

REFERENCES.

1. Bolhuis JJ, Okanoya K, Scharff C (2010) Twitter evolution: converging mechanisms in birdsong and human speech. Nature Reviews Neuroscience 11:747-759.

2. Skinner BF (1957) Verbal Behavior (Copley Publishing Group).

3. Brembs B, Plendl W (2008) Double dissociation of PKC and AC manipulations on operant and classical learning in Drosophila. Current Biology 18:1168-1171.

4. Chomsky N (1959) A Review of B. F. Skinner’s Verbal Behavior. Language 35:26-58. Available at: https://cogprints.org/1148.

5. Brembs B (2009) Mushroom bodies regulate habit formation in Drosophila. Current Biology 19:1351-1355.